While powerful AI models can significantly enhance sales and retention efforts, their ‘black box’ nature poses considerable challenges. The move towards Explainable AI is crucial for fostering trust, ensuring ethical practices, and unlocking the full strategic potential of AI in these vital business domains.

XAI seeks to bridge the gap between the complex, often non-linear decision-making processes of advanced AI models and the human need for understanding, accountability, and control. It’s about empowering users not just to use AI, but to truly comprehend and collaborate with it. Platforms like Dynamiq make this shift accessible by combining explainability, guardrails, and observability into an all-in-one AI environment designed for both enterprises and SMEs.


The ‘Black Box’ Problem in AI for Sales and Retention

The ‘black box’ problem in Artificial Intelligence refers to the phenomenon where the internal workings of an AI model are opaque, making it difficult for humans to understand how the AI arrived at a particular decision or prediction. This issue is particularly pertinent in critical business functions like sales and retention, where trust, accountability, and strategic insights are paramount.

What it means for Sales and Retention:

In sales, AI models are often used for lead scoring, predicting customer lifetime value, and recommending personalized sales strategies. For retention, AI might predict customer churn, suggest proactive interventions, or personalize customer service interactions. When these AI systems operate as ‘black boxes,’ several challenges arise:

1. Lack of Trust and Adoption: Sales and retention professionals may be hesitant to fully trust or adopt AI recommendations if they cannot understand the underlying logic. This can lead to underutilization of powerful AI tools.

2. Difficulty in Debugging and Improvement: If an AI model makes an incorrect or suboptimal decision (e.g., misidentifying a high-value lead or failing to predict churn for a key customer), it’s challenging to diagnose why the error occurred. This hinders the ability to refine the model or its training data.

3. Bias and Fairness Concerns: Opaque AI models can inadvertently perpetuate or amplify existing biases present in the training data. In sales and retention, this could lead to discriminatory practices in targeting customers or offering preferential treatment, raising ethical and legal concerns (e.g., non-compliance with GDPR).

4. Limited Strategic Insight: A key benefit of data analysis is gaining insights to inform future strategies. If the AI simply provides an answer without explaining its reasoning, businesses miss out on understanding the drivers behind customer behavior or sales success, limiting their ability to adapt and innovate.

5. Regulatory and Compliance Risks: As regulations around AI and data privacy evolve, businesses may be required to explain AI-driven decisions, especially those impacting individuals. A ‘black box’ model makes such compliance difficult.

The Need for Explainable AI (XAI):

To mitigate the ‘black box’ problem, the concept of Explainable AI (XAI) has gained significant traction. XAI aims to develop AI models that can provide human-understandable explanations for their outputs. In sales and retention, XAI can help by:

• Building Confidence: Sales teams can better trust and leverage AI insights if they understand the factors contributing to a lead score or churn prediction.

• Enabling Targeted Action: Knowing why a customer is likely to churn allows for more precise and effective retention strategies.

• Ensuring Fairness: XAI can help identify and mitigate biases, promoting equitable treatment of customers.

• Driving Business Strategy: Transparent AI models can reveal new correlations and insights, empowering businesses to make more informed strategic decisions beyond just following AI recommendations.

What is the Real Value of XAI?

The real value of Explainable AI (XAI) lies in transforming opaque AI systems into transparent, understandable, and trustworthy tools. This translates into several tangible benefits, especially in critical domains like sales and retention:

1. Enhanced Trust and Adoption: When users (sales teams, managers, customers) understand why an AI made a certain recommendation or prediction, they are more likely to trust and adopt its insights. This moves AI from a mysterious black box to a collaborative partner.

2. Improved Decision-Making: XAI provides insights into the factors influencing an AI’s output. For instance, in sales, understanding why a lead is scored high (e.g., specific website interactions, company size, industry trends) allows sales professionals to tailor their approach more effectively. In retention, knowing why a customer is at risk of churning (e.g., decreased product usage, recent support issues, competitor activity) enables targeted, proactive interventions.

3. Bias Detection and Mitigation: AI models can inadvertently learn and perpetuate biases present in their training data. XAI techniques help uncover these biases by revealing which features or patterns the model is relying on. This is crucial for ensuring fairness and ethical AI deployment, preventing discriminatory practices in customer targeting or service.

4. Debugging and Performance Improvement: When an AI model performs unexpectedly or makes errors, XAI helps pinpoint the root cause. By understanding the decision logic, developers and data scientists can identify problematic data, flawed features, or model misconfigurations, leading to more robust and accurate AI systems.

5. Regulatory Compliance and Accountability: As AI governance and data privacy regulations (like GDPR) become more stringent, organizations may be required to explain AI-driven decisions that impact individuals. XAI provides the necessary transparency to meet these compliance demands and establish clear accountability.

6. Strategic Insights and Innovation: Beyond just predictions, XAI can reveal hidden correlations and drivers within complex datasets. This deeper understanding can lead to novel strategic insights about customer behavior, market trends, and operational efficiencies, fostering innovation and competitive advantage.

And if you’re building solutions for clients rather than only adopting them internally, you may also find our guide on how to sell AI automation services helpful. It covers how startups and service providers can position, package, and monetise AI optimisation services in a competitive market.

What are the Technical Algorithms that Make Trust? (Key XAI Techniques)

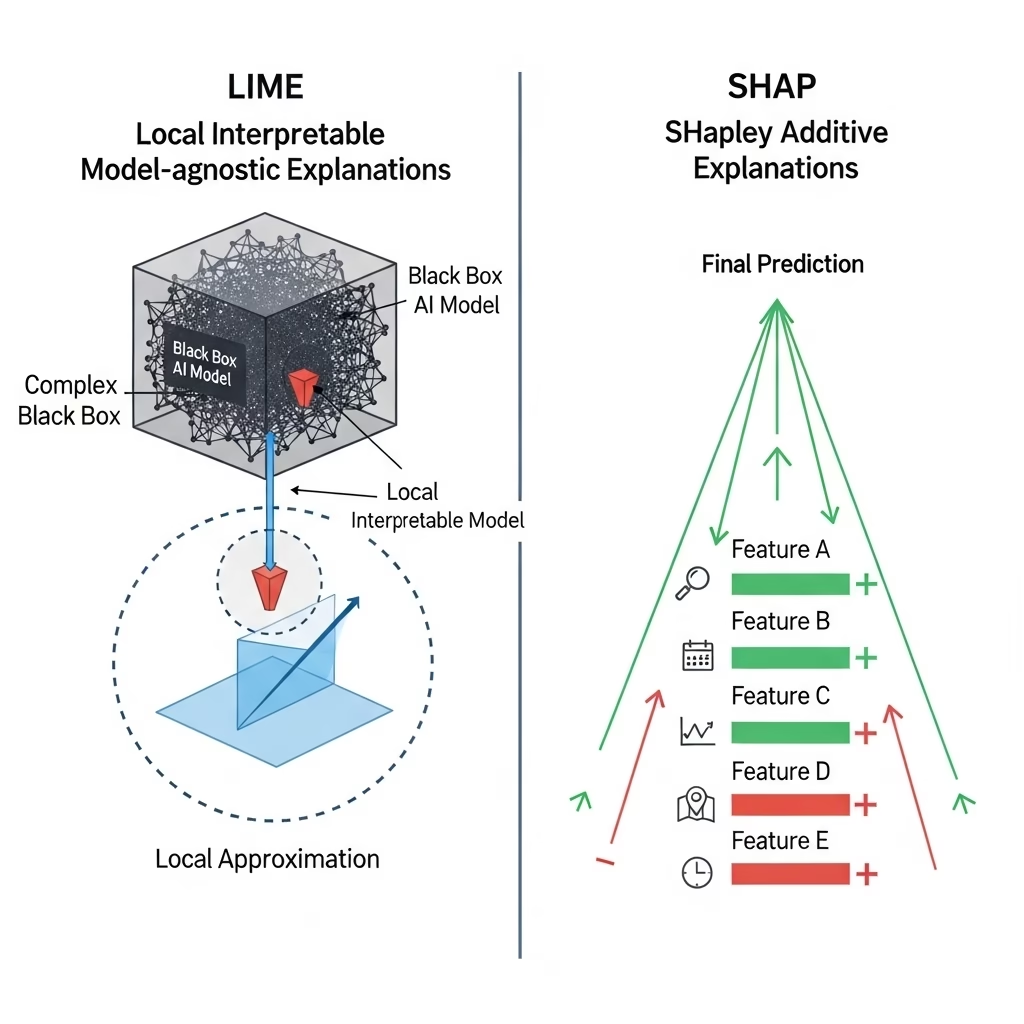

Building trust in AI often involves making its internal workings interpretable. This is achieved through various technical algorithms and methodologies, broadly categorized as Explainable AI (XAI) techniques. Two prominent and widely used model-agnostic techniques are LIME and SHAP:

1. LIME (Local Interpretable Model-agnostic Explanations):

Concept: LIME focuses on explaining individual predictions of any black-box machine learning model. It does this by approximating the complex model locally around the prediction with a simpler, interpretable model (like a linear regression or decision tree).

How it builds trust: For a specific prediction (e.g., why a particular customer is predicted to churn), LIME highlights the most influential features that contributed to that specific outcome. This local explanation helps users understand the immediate drivers of a single decision, making it more transparent and trustworthy.
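To make the local-approximation idea concrete, here is a minimal sketch in plain Python. It is not the `lime` library's implementation (which also handles sampling strategies, discretization, and feature selection); it only illustrates the core recipe: perturb the instance, weight samples by proximity, and fit a weighted linear model. The `black_box` churn model, its feature names, and all parameter values are hypothetical.

```python
import math
import random

# Hypothetical black-box churn model: nonlinear in both inputs (illustration only).
def black_box(logins_per_week, support_tickets):
    z = 1.5 - 0.8 * logins_per_week + 0.6 * support_tickets ** 2
    return 1.0 / (1.0 + math.exp(-z))

def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def local_linear_explanation(model, instance, n_samples=2000, scale=0.5,
                             kernel_width=0.75, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    d = len(instance)
    # Accumulate the weighted normal equations (X^T W X) beta = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, scale) for _ in range(d)]
        sample = [v + o for v, o in zip(instance, offsets)]
        y = model(*sample)
        # Samples closer to the instance get a larger weight.
        weight = math.exp(-sum(o * o for o in offsets) / kernel_width ** 2)
        row = [1.0] + offsets  # intercept term, then offsets from the instance
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    beta = solve(xtwx, xtwy)
    return beta[0], beta[1:]

# Explain one customer: 2 logins per week, 1 open support ticket.
intercept, coefficients = local_linear_explanation(black_box, [2.0, 1.0])
for name, coef in zip(["logins_per_week", "support_tickets"], coefficients):
    print(f"{name}: {coef:+.3f}")
```

In this toy setup, the sign of each coefficient tells a sales or retention user which direction a feature pushes the churn prediction for this one customer. The explanation is only valid near that customer, which is the "local" in LIME.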

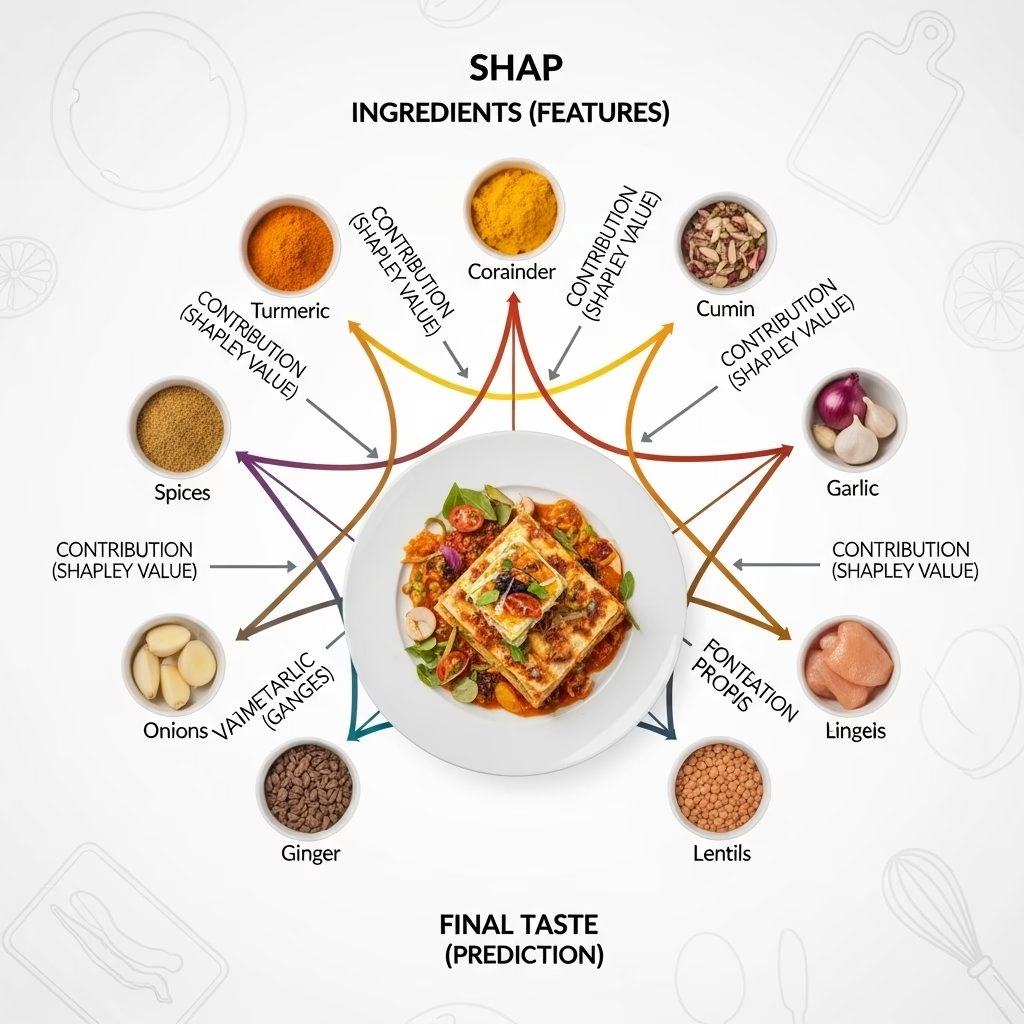

2. SHAP (SHapley Additive exPlanations):

Concept: SHAP is based on game theory, specifically the concept of Shapley values, which fairly distribute the contribution of each feature to the prediction. It provides a unified measure of feature importance.

How it builds trust: SHAP assigns each feature a value showing how much it moves the prediction away from the base value (the average model output); together, these values add up to the difference between the prediction and the base value. Each feature’s contribution is averaged over all possible combinations of the other features. This provides both local (individual prediction) and global (overall model behavior) interpretability.
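The Shapley idea can be sketched by brute force for a model with only a few features. The example below is an assumption-laden illustration, not the `shap` library's API: `churn_score` is a hypothetical scoring function, and a single reference customer stands in for the base value, whereas SHAP typically averages over a background dataset and uses faster approximations.

```python
from itertools import combinations
from math import factorial

# Hypothetical churn-risk scorer with one interaction term (illustration only).
def churn_score(customer):
    score = 0.10
    if customer["usage_drop"]:
        score += 0.30
    if customer["open_tickets"]:
        score += 0.20
    # A usage drop combined with open tickets is treated as extra risky.
    if customer["usage_drop"] and customer["open_tickets"]:
        score += 0.10
    if customer["on_annual_plan"]:
        score -= 0.05
    return score

def shapley_values(model, instance, baseline):
    """Exact Shapley values by enumerating every coalition of features."""
    features = list(instance)
    n = len(features)

    def value(coalition):
        # Features in the coalition take the instance's values;
        # all other features stay at the baseline's values.
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in features}
        return model(mixed)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):
            for subset in combinations(others, k):
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                total += weight * (value(set(subset) | {f}) - value(set(subset)))
        phi[f] = total
    return phi

baseline = {"usage_drop": False, "open_tickets": False, "on_annual_plan": False}
customer = {"usage_drop": True, "open_tickets": True, "on_annual_plan": True}

phi = shapley_values(churn_score, customer, baseline)
base_value = churn_score(baseline)
prediction = churn_score(customer)

for name, contribution in phi.items():
    print(f"{name}: {contribution:+.2f}")
print(f"base {base_value:.2f} + contributions = {base_value + sum(phi.values()):.2f}")
```

For this toy customer the contributions come out at roughly +0.35 for the usage drop, +0.25 for open tickets, and -0.05 for the annual plan. The 0.10 interaction bonus is split evenly between the two features that cause it, and the contributions add up to the gap between the prediction and the base value. Enumeration is exponential in the number of features, so it only works for small examples like this one.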

Other Techniques: Beyond LIME and SHAP, other XAI techniques include:

• Feature Importance: Simple methods that rank features by their contribution to model performance.

• Partial Dependence Plots (PDPs) and Individual Conditional Expectation (ICE) plots: Visualize the marginal effect of one or two features on the predicted outcome.

• Rule-based explanations: Extracting human-readable rules from complex models.

• Counterfactual Explanations: Identifying the smallest change to a data point that would alter the model’s prediction (e.g., “if the customer had done X instead of Y, they would not have churned”).
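Of the techniques listed above, counterfactual explanations are perhaps the easiest to sketch. The snippet below assumes a hypothetical rule-based churn model and a small grid of actionable features; it searches exhaustively for the non-churn scenario that requires the smallest total change. Real counterfactual tools use smarter search and plausibility constraints, so treat this as an illustration of the idea only.

```python
from itertools import product

# Hypothetical churn rule standing in for a trained model (illustration only).
def predicts_churn(customer):
    risk = (60
            - 10 * customer["logins_per_week"]
            + 20 * customer["support_tickets"]
            + 2 * customer["months_since_upgrade"])
    return risk >= 50

def nearest_counterfactual(model, customer, actionable_ranges):
    """Return the non-churn candidate with the smallest total change, and that cost."""
    names = list(actionable_ranges)
    best, best_cost = None, None
    for values in product(*(actionable_ranges[n] for n in names)):
        # Only actionable features are varied; everything else is kept as-is.
        candidate = dict(customer, **dict(zip(names, values)))
        if model(candidate):
            continue  # still predicted to churn, so not a counterfactual
        cost = sum(abs(candidate[n] - customer[n]) for n in names)
        if best_cost is None or cost < best_cost:
            best, best_cost = candidate, cost
    return best, best_cost

customer = {"logins_per_week": 2, "support_tickets": 1, "months_since_upgrade": 5}
# Months since upgrade cannot be changed by the retention team, so it is left out.
actionable = {"logins_per_week": range(0, 8), "support_tickets": range(0, 6)}

counterfactual, cost = nearest_counterfactual(predicts_churn, customer, actionable)
changes = {n: (customer[n], counterfactual[n])
           for n in actionable if counterfactual[n] != customer[n]}
print(changes)
```

Here the smallest change that flips the prediction is one more login per week together with resolving the open support ticket, which gives a retention team a concrete action rather than just a risk score.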

These algorithms build trust by providing transparency and interpretability, allowing users to verify the AI’s reasoning, identify potential flaws, and gain confidence in its outputs.

Leading Providers of Explainable AI (XAI) Solutions

Tech Giants & Consulting Firms

- Microsoft offers XAI tools within its Azure Machine Learning platform, focusing on model transparency, fairness, and accountability to support responsible AI deployment.

- IBM has been a long-standing contributor to the field, developing explainable AI frameworks to make model reasoning transparent and trustworthy.

- Salesforce and other major tech players are also increasingly integrating XAI into their enterprise solutions.

- Accenture offers AI-driven consulting and services that embed transparency, fairness, and explainable practices for enterprise clients.

- Oracle and SAP have begun incorporating explainability features into their cloud and enterprise platforms.

Dynamiq for Small and Medium Enterprises (SMEs)

If your company operates in the SME segment, you don’t need to compromise between advanced AI capabilities and budget efficiency. Dynamiq offers a flexible, enterprise-grade platform tailored not only for large corporations but also for small and medium enterprises looking to adopt AI responsibly and cost-effectively.

With Dynamiq, SMEs can:

- Deploy AI on-premise to keep full control over sensitive data while ensuring compliance with local and international regulations.

- Fine-tune large language models (LLMs) with just a few clicks, so even smaller teams can develop custom solutions without requiring a full in-house data science department.

- Integrate company-specific data using RAG, enhancing customer service, internal knowledge systems, or sector-specific applications.

- Monitor and evaluate AI performance through built-in observability dashboards, helping businesses quickly identify issues and optimise efficiency.

- Apply guardrails to ensure outputs remain precise, correct, and aligned with business expectations, which is crucial for SMEs operating in regulated industries.

The result is a streamlined, all-in-one AI development cycle that empowers SMEs to innovate, stay competitive, and adopt AI solutions at their own pace—without overspending on complex enterprise systems.

👉 If you’re ready to explore how Dynamiq can fit your SME’s needs, you can get started here: Dynamiq SME Offer.

Enterprise XAI Initiatives

- J.P. Morgan has established an Explainable AI Center of Excellence (XAI COE) to centralize research and best practices on explainability across financial services.

Hands-On XAI Tools & Libraries

- OmniXAI: Open-source Python library offering a unified interface for multiple explainable ML techniques (e.g., feature attribution, counterfactuals), supporting various data types and models.

- TrustyAI Explainability Toolkit: Java and Python library implementing XAI methods like LIME and SHAP with enterprise-ready decision services in mind.

- SHAP: Widely used open-source Python library for feature impact analysis, especially in model-agnostic scenarios.

Summary Table

| Type | Examples & Focus |
|---|---|
| Tech giants & consultancies | Microsoft (Azure ML), IBM, Accenture, Oracle, SAP (platforms & consulting) |
| XAI-focused startups | Fiddler AI, Arthur AI, TruEra, Giskard, DataRobot, H2O.ai, etc. |
| Enterprise efforts | J.P. Morgan’s XAI Center of Excellence |
| Open-source tools | OmniXAI, TrustyAI Toolkit, SHAP libraries |

Recommended Next Steps for You

Align with regulations, especially if operating in areas with AI explainability mandates (finance, healthcare, GDPR, AI Act).

If your company is a small or medium enterprise, the most practical approach is to adopt an all-in-one solution that balances enterprise-grade performance with affordability and ease of use. This is exactly where Dynamiq stands apart. It gives SMEs the same power as large corporations, while remaining flexible, secure, and accessible to smaller teams.

👉 To explore how Dynamiq can support your business in adopting explainable AI with confidence, start here: Dynamiq SME Offer.

By beginning with a platform like Dynamiq, you can:

- Accelerate AI adoption without building everything in-house.

- Maintain compliance and security with on-premise deployment.

- Scale confidently, knowing your models are transparent, explainable, and fully under your control.

The next step is simple: test the platform, experience its capabilities, and see how quickly your business can move from AI experimentation to real-world impact.

Conclusion

Explainable AI is no longer a luxury; it is becoming a necessity for businesses that want to build trust, comply with regulations, and fully harness the potential of AI in sales, retention, and beyond. The ‘black box’ problem undermines adoption, but with XAI techniques like LIME and SHAP, supported by integrated guardrails, companies can finally understand and control their AI systems.

While large enterprises may turn to Microsoft, IBM, or specialized startups, SMEs need a practical, cost-effective solution. This is where Dynamiq provides unique value: a complete AI development platform that combines explainability, compliance, and customization in one place.

What is Explainable AI (XAI)?

Explainable AI refers to methods and tools that make AI models transparent and understandable. Instead of working like a “black box,” XAI helps businesses see why an AI made a decision, improving trust and accountability.

Why does XAI matter for sales and retention?

In sales and retention, decisions must be explainable: from why a lead is scored high to why a customer is flagged as a churn risk. XAI ensures teams trust AI recommendations, reduce bias, and meet compliance standards.

What are the most common XAI techniques?

Popular approaches include LIME (explains individual predictions with a simple local model), SHAP (attributes each prediction to feature contributions, for single predictions and for the model as a whole), and additional methods like feature importance, counterfactual explanations, and partial dependence plots.

Who provides XAI solutions?

Big providers include Microsoft, IBM, Salesforce, and Accenture. Startups like Fiddler AI, Arthur AI, TruEra, and Dynamiq focus on monitoring, explainability, and end-to-end AI development for both SMEs and enterprises. Open-source options include OmniXAI and TrustyAI.

What makes Dynamiq different from other providers?

Dynamiq is designed for SMEs as well as larger enterprises, offering an all-in-one platform: on-premise deployment, LLM fine-tuning, observability, guardrails, and RAG data integration. Instead of combining multiple tools, SMEs get a single, affordable, enterprise-grade solution.